One Simple Image Shows How on Twitter, Polarization is Built In

What you see on social media is probably very different from what your friends, family, and other people see. Tech algorithms keep us locked inside filter bubbles, reinforcing our own worldview and beliefs.

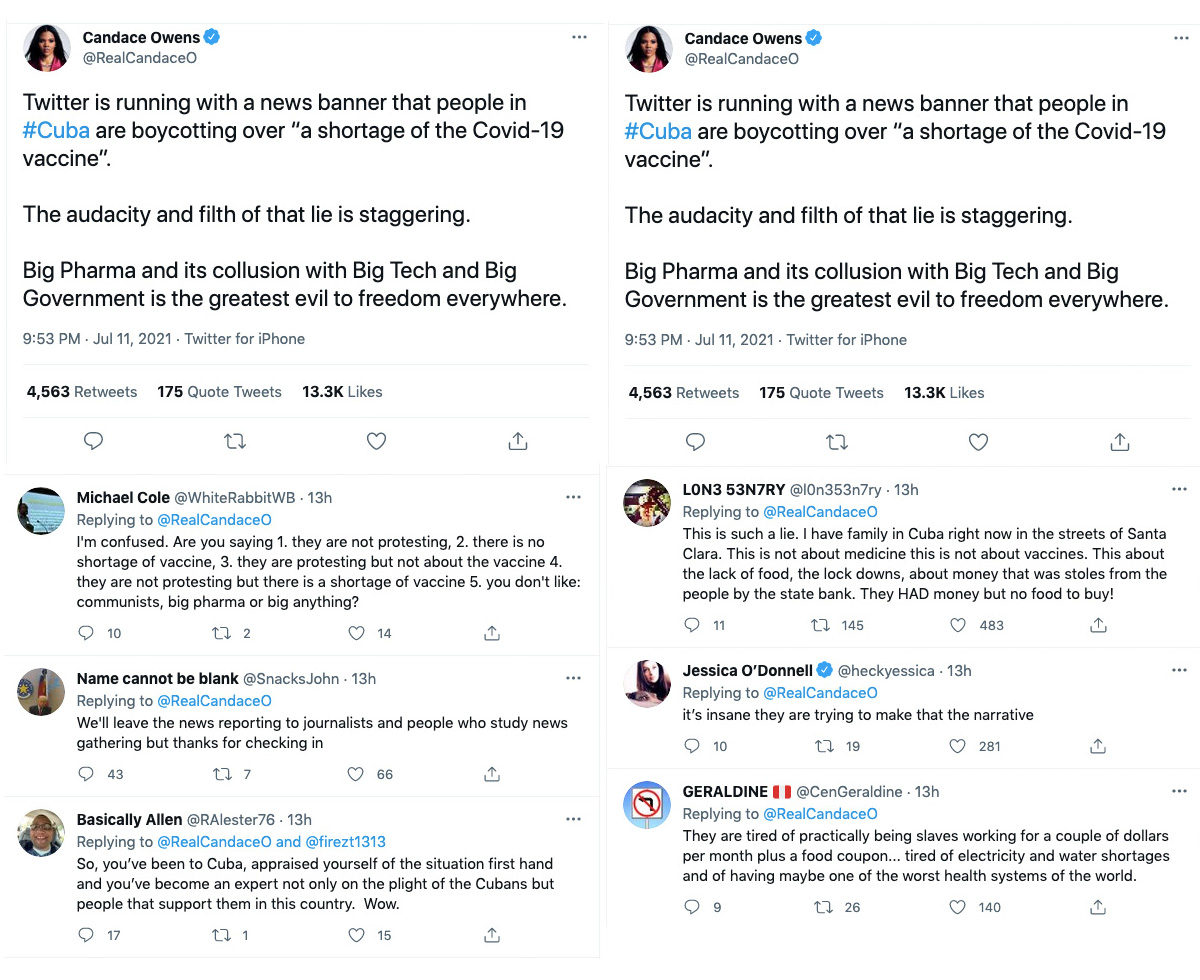

Take a look, for instance, at these two images showing top replies to a tweet by Candace Owens, a conservative commentator, about protests in Cuba, as viewed from two different Twitter accounts.

The image on the left shows the top replies that I saw when logged into a brand account that I help to run.

The image on the right shows the top replies I saw when logged into my personal Twitter account.

Depending on which account was viewing the tweet, Twitter showed me people either agreeing or disagreeing with Candace.

I’m no expert on social media algorithms, but Twitter probably knows I am personally not a fan of communism (to say the least) based on who I follow and interact with, which tweets I like and retweet, keywords I use in my own tweets, etc. So, when I viewed Candace’s tweet from my personal account, Twitter showed me replies from others who have a similar worldview.

When viewing her tweet from the brand account, however, I saw something very different — people skeptical of Candace’s take.

Now imagine this happening to every single one of us using social media every single day. What I see and what you see are very different — meaning we’re all living in filter bubbles. We see opinions and information that are tailored to us and reinforce our own beliefs, and rarely see content that strays outside of that. When we log into social media, we can enjoy the reassurance of confirmation bias, never having our existing beliefs challenged — unless we actively seek out opposing perspectives.

It’s no secret that social media companies like Facebook, Twitter and Instagram use algorithms to tailor content to us. Our newsfeeds look very different depending on what the algorithm knows about us — are we conservative or liberal? Do we like dogs, architecture, celebrities, sports, activism, guns, something else?

What you click on, share and interact with helps technology companies to get a sense of who you are, what you like, and what you’re interested in, and then tailor content accordingly.

This isn’t wholly a bad thing — after all, the sheer volume of information out there means we absolutely need a filter to narrow it down and save ourselves time. For instance, I’m particularly interested in using Instagram to see images of classical architecture, vintage fashion, and dance. If every time I logged on, I had to dig and dig for this content because Instagram was instead showing me videos of celebrities, baking, and rugby, I’d find the platform useless and not come back.

So it’s not bad that a filter exists. The problem is when we are unaware of the filter and when it is applied to politics and news in a one-sided way.

It is not good to never be exposed to alternative viewpoints. Those who have escaped authoritarian regimes with heavy information control talk about how they had no idea alternative systems of government even existed. In the U.S. at least, alternative viewpoints and information are still largely available, but major technology companies don’t help us to find them. If we don’t have the curiosity to dig beyond what people on “our side” are already saying, we will miss out on important perspectives and information.

Being inside a filter bubble is comfortable, because it’s easier for us to digest viewpoints we already agree with. But when it comes to politics and news, it’s good to actively seek out the other side and not rely on social media to do it for us. If not, we may feel self-assured about our views, when really there are perspectives and information out there that would better inform us or even change our views entirely.

This is essentially why AllSides exists: we’re a technology company that exposes you to different perspectives. While no filter can show you everything that’s out there, using tools like AllSides can help you to actively seek alternative views. In a world of one-sided information, that’s crucially important.

Julie Mastrine is the Director of Marketing at AllSides. She has a Lean Right bias.

This piece was reviewed by Managing Editor Henry A. Brechter (Center bias).
